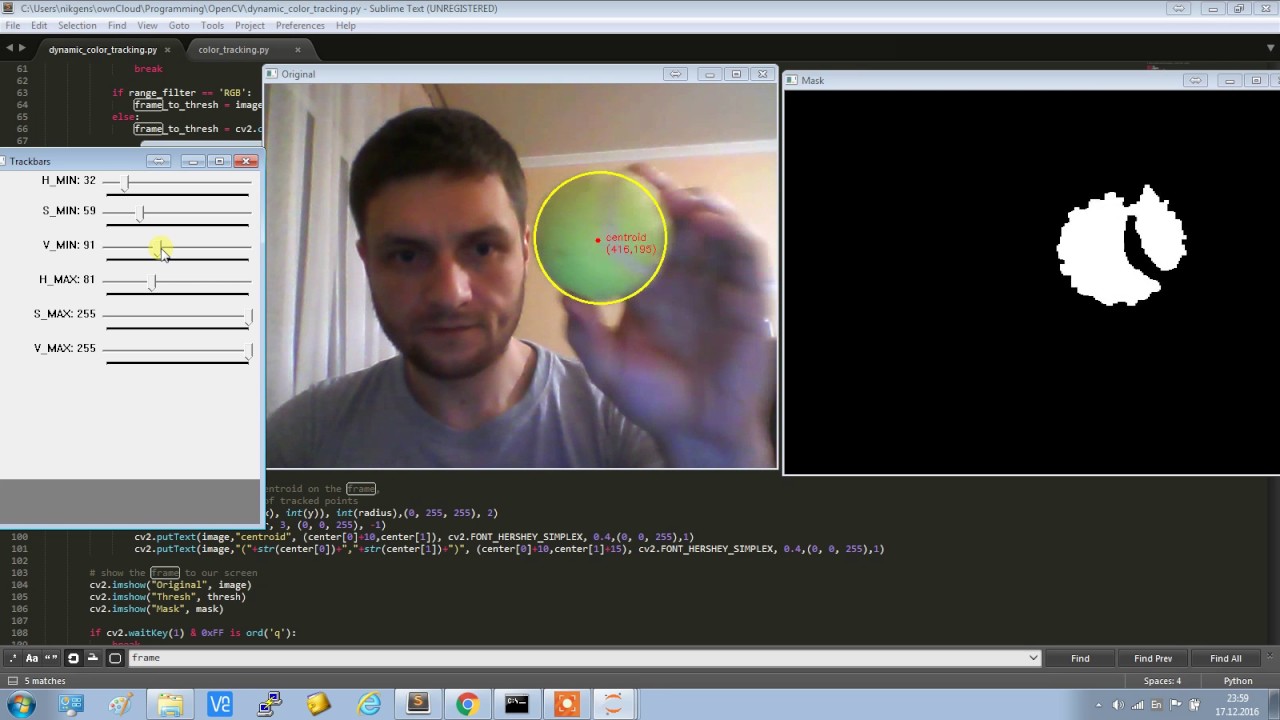

- 21 FPS using a 320 * 240 image, run without visualizing results.
- 16 FPS using a 320 * 240 image, run while visualizing results.
- 11 FPS using a 640 * 480 image, run while visualizing results (image above).

The above numbers are based on tests using a MacBook Pro CPU (i7, 2.5GHz, 16GB).

Motivation - Why Track/Detect hands with Neural Networks?

There are several existing approaches to tracking hands in the computer vision domain. Many of these approaches are rule based (e.g. extracting the background based on texture and boundary features, or distinguishing between hands and background using color histograms and HOG classifiers), which makes them not very robust. For example, these algorithms can get confused if the background is unusual, if sharp changes in lighting conditions cause sharp changes in skin color, or if the tracked object becomes occluded (see here for a review paper on hand pose estimation from the HCI perspective).
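To make the lighting problem described above concrete, here is a minimal sketch of a rule-based skin classifier. It assumes a fixed-threshold RGB rule of the kind often used in classic skin segmentation. The thresholds, the function names `is_skin_rgb` and `scale_brightness`, and the sample pixel are illustrative assumptions, not taken from any of the approaches referenced here.

```python
def is_skin_rgb(r, g, b):
    """Fixed-threshold RGB skin rule (illustrative thresholds, an assumption)."""
    return (
        r > 95 and g > 40 and b > 20
        and max(r, g, b) - min(r, g, b) > 15
        and abs(r - g) > 15
        and r > g and r > b
    )


def scale_brightness(pixel, factor):
    """Simulate a lighting change by scaling each channel, clamped to 0..255."""
    return tuple(min(255, max(0, round(c * factor))) for c in pixel)


# Hypothetical skin-tone sample under good lighting.
skin_pixel = (200, 140, 120)

well_lit = is_skin_rgb(*skin_pixel)
dimmed = is_skin_rgb(*scale_brightness(skin_pixel, 0.4))
overexposed = is_skin_rgb(*scale_brightness(skin_pixel, 2.0))

print(well_lit, dimmed, overexposed)
```

The same pixel passes the rule when well lit but fails it once the scene is dimmed or overexposed, which is the kind of brittleness that motivates a learned detector instead of hand-written rules.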

As with any DNN/CNN based task, the most expensive (and riskiest) part of the process is finding or creating the right annotated dataset. I first experimented with the Oxford Hands Dataset, but the results were not good. I then tried the Egohands Dataset, which was a much better fit for my requirements (egocentric view, high-quality images, hand annotations).
